If you're using a CNAME on your root domain, you're gonna have problems. That's a DNS limitation - and if you want to host a root domain on S3, AWS won't give you an IP address to point an A record at. You can solve this with Route53, but what if you want to keep your domain in Cloudflare?

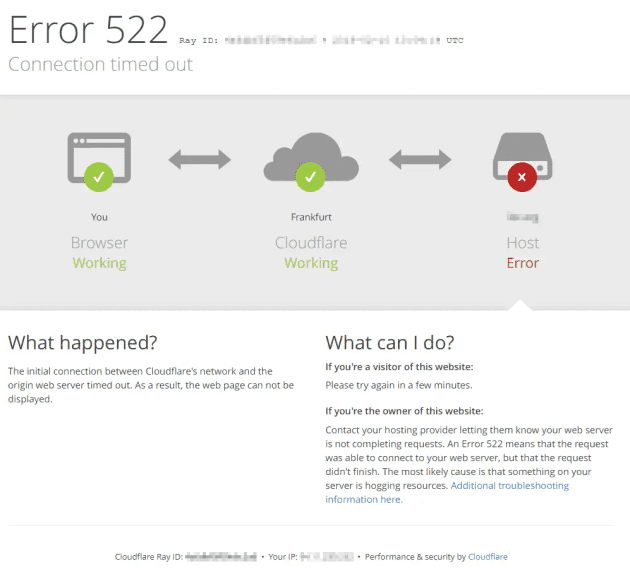

You'll also have problems if you want to use Cloudflare Full SSL on an S3 bucket configured for static website hosting - resulting in nothing but Cloudflare error 522 (Connection timed out) pages.

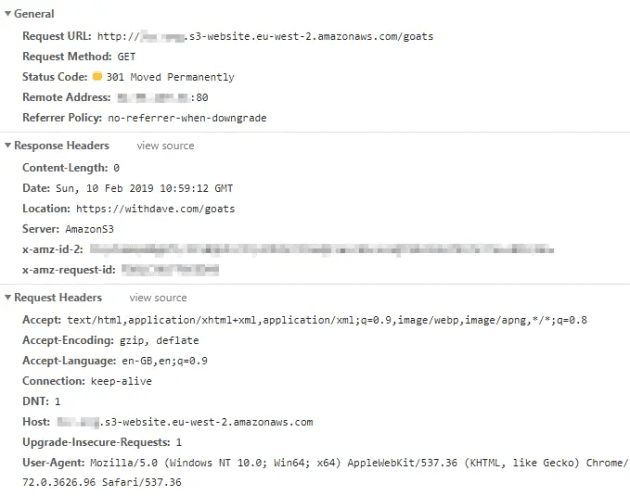

My use case is a set of simple redirects, following on from a post about 301 redirects in S3.

The easy solution to both problems is to use CloudFront to serve HTTPS requests for your bucket, but I'm going to assume that you want this solution to be as cheap as possible - and use only S3 from within the AWS ecosystem.
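Since the plan is to rely on S3 alone, here is a minimal sketch of what a redirect-only bucket's website configuration could look like. It assumes every request should go to a single host (the hypothetical `www.example.com`) using S3's `RedirectAllRequestsTo` setting; the earlier post on 301 redirects may well use a different mechanism, such as routing rules. The code only builds and prints the configuration, and the boto3 call is left as a comment.

```python
import json


def redirect_all_config(target_host: str, protocol: str = "https") -> dict:
    """Build an S3 website configuration that redirects every request to target_host."""
    if protocol not in ("http", "https"):
        raise ValueError("protocol must be 'http' or 'https'")
    return {
        "RedirectAllRequestsTo": {
            "HostName": target_host,  # hypothetical destination host
            "Protocol": protocol,     # scheme used in the redirect's Location header
        }
    }


config = redirect_all_config("www.example.com")
print(json.dumps(config, indent=2))

# Applying it (assumes boto3 is installed, credentials are configured and a
# bucket named after the root domain exists; not executed here):
# import boto3
# boto3.client("s3").put_bucket_website(Bucket="example.com", WebsiteConfiguration=config)
```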

Let's start with error 522 - it's all about HTTPS

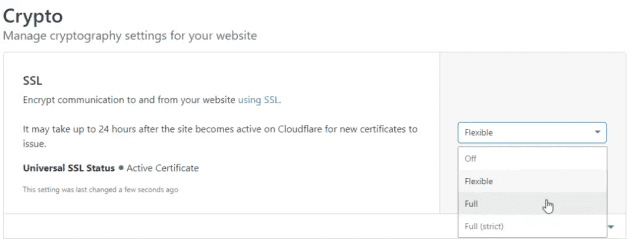

Cloudflare offers a number of different SSL options under Site > Crypto > SSL. Most sites I work with have a valid SSL certificate on the host server, so I can use Full (strict) SSL on the Cloudflare end - this means both connections are encrypted: the one between Cloudflare and the host server (with the certificate validated), and the one between Cloudflare and the user.

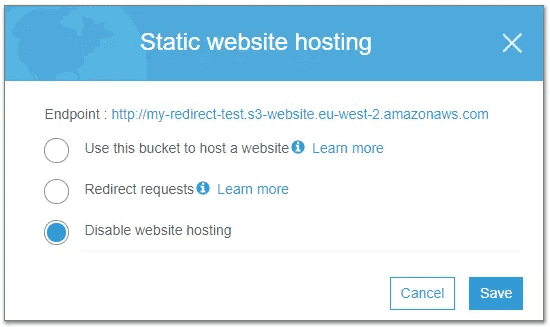

But with S3, the website endpoint AWS provides only supports HTTP. Historically, you could use HTTPS by changing your endpoint URL; at some point this changed. So if you've got static website hosting configured, you're stuck with the HTTP endpoint.

It also means that if you set SSL on Cloudflare to Full or Full (strict), your users will receive Error 522 - Connection timed out. You may also see Error 520, which is a more generic error. Neither really tells you what's wrong, but it's easy to work out: Cloudflare is trying to reach the origin over HTTPS, and the S3 website endpoint doesn't answer on HTTPS.
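A simplified model of why the error appears, assuming Flexible mode reaches the origin over HTTP on port 80, Full and Full (strict) reach it over HTTPS on port 443, and the S3 website endpoint only answers on port 80. The names below are illustrative and are not part of any Cloudflare API.

```python
# Illustrative model only: how Cloudflare reaches the origin in each SSL mode.
ORIGIN_CONNECTION = {
    "flexible": ("http", 80),
    "full": ("https", 443),
    "full_strict": ("https", 443),
}

# Assumption: an S3 static website endpoint serves plain HTTP only.
S3_WEBSITE_PORTS = frozenset({80})


def origin_reachable(ssl_mode: str, origin_ports=S3_WEBSITE_PORTS) -> bool:
    """True if the port Cloudflare would use in this mode is open on the origin."""
    _scheme, port = ORIGIN_CONNECTION[ssl_mode]
    return port in origin_ports


for mode in ORIGIN_CONNECTION:
    outcome = "reachable" if origin_reachable(mode) else "connection fails (e.g. error 522)"
    print(f"{mode}: {outcome}")
```

In this model only Flexible lines up with what the S3 website endpoint offers, which is the trade-off weighed in the options that follow.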

According to an AWS help page, if your bucket is configured as a website endpoint, you can't use HTTPS between CloudFront and S3 either (so it's not just an external limitation).

This means we have two options:

- Use CloudFront to serve our content over SSL - this comes with additional cost and complexity

- Use Flexible SSL on Cloudflare - this is free, but will reduce security between Cloudflare and AWS

This is explained in the help text from the Cloudflare console:

Flexible SSL: You cannot configure HTTPS support on your origin, even with a certificate that is not valid for your site. Visitors will be able to access your site over HTTPS, but connections to your origin will be made over HTTP. Note: You may encounter a redirect loop with some origin configurations.

Full SSL: Your origin supports HTTPS, but the certificate installed does not match your domain or is self-signed. Cloudflare will connect to your origin over HTTPS, but will not validate the certificate.

If you're using this bucket to do redirects only (i.e. not sending any pages or data) then the impact is lessened, although it is still a great example of how - as a user - you really can't tell what happens between servers once your request is sent.

Effectively, our request is secure up to the Cloudflare servers, and is then sent in the clear to S3. Obviously for sensitive materials this just won't do - enter CloudFront in that case. For our limited redirect requirements, the risk may be worth the reward.

CNAME on a root domain

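Normally, creating a CNAME record for the root domain instead of an A record violates the DNS specification: a CNAME can't coexist with other records at the same name, and the root domain has to carry SOA and NS records (and often MX records). This can cause issues with mail delivery, among other things.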
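To make the conflict concrete, here is a small sketch that flags a name where a CNAME sits alongside any other record type. The record sets are hypothetical, and the check covers only the "CNAME plus something else" rule.

```python
def cname_conflict(record_types: list[str]) -> bool:
    """True if a CNAME coexists with any other record type at the same name."""
    types = [t.upper() for t in record_types]
    return "CNAME" in types and any(t != "CNAME" for t in types)


# Hypothetical root-domain record sets.
apex_with_cname = ["SOA", "NS", "MX", "CNAME"]
apex_with_a = ["SOA", "NS", "MX", "A"]

print(cname_conflict(apex_with_cname))
print(cname_conflict(apex_with_a))
```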

If we use Route53 we can create an A Alias record, but with Cloudflare we can create a CNAME and benefit from their CNAME flattening feature: Cloudflare follows the CNAME itself and answers queries for the root domain with the resulting A records. This lets you use a CNAME while remaining compliant with the DNS spec.
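Conceptually, flattening means the DNS server follows the CNAME chain on the client's behalf and returns only the final A records. The toy resolver below sketches that idea over an in-memory zone; the hostnames and documentation-range IP addresses are made up, and real-world details (TTLs, caching, DNSSEC) are ignored.

```python
# Hypothetical zone data: name -> (record type, value).
ZONE = {
    "example.com": ("CNAME", "bucket.website.example.net"),
    "bucket.website.example.net": ("CNAME", "edge.example.net"),
    "edge.example.net": ("A", ["192.0.2.10", "192.0.2.11"]),
}


def flatten(name: str, zone: dict, max_hops: int = 8) -> list[str]:
    """Follow CNAMEs from name and return the A records at the end of the chain."""
    for _ in range(max_hops):
        rtype, value = zone[name]
        if rtype == "A":
            return value
        if rtype != "CNAME":
            raise ValueError(f"unsupported record type {rtype}")
        name = value  # hop to the CNAME target
    raise RuntimeError("too many CNAME hops (possible loop)")


print(flatten("example.com", ZONE))
```

A client asking for `example.com` would only ever see the A records, never the CNAME, which is what keeps the root domain compliant.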

Final thought - Cloudflare page rules for caching

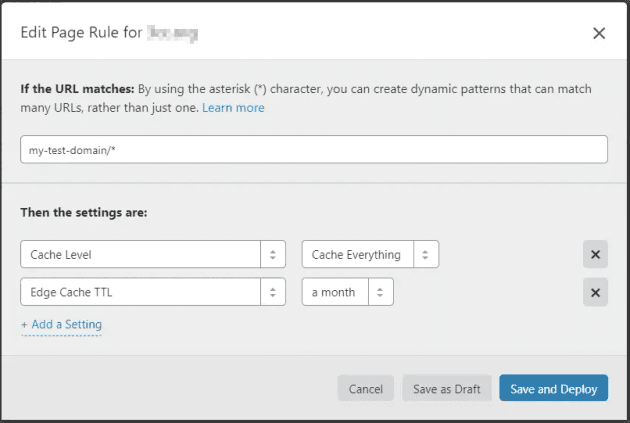

With Cloudflare, you get 3 page rules per free site. This allows you to do some clever things, like crank up the caching level to reduce the number of requests sent to S3.

In this case, I'm using S3 for 301 redirects only - so will those be cached? Cloudflare support says yes, as long as "no cache headers are provided (no Cache-Control or Expires) and the URL is cacheable". To be sure, I'm going to add a page rule to catch the rest.
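The condition Cloudflare support describes can be checked with a small helper. This is a sketch that only looks for the two header names mentioned in that answer; the sample headers for a redirect response are hypothetical.

```python
def has_cache_headers(headers: dict) -> bool:
    """True if the response carries Cache-Control or Expires (names are case-insensitive)."""
    names = {name.lower() for name in headers}
    return "cache-control" in names or "expires" in names


# Hypothetical headers from a 301 served by an S3 website endpoint.
redirect_headers = {
    "Location": "https://www.example.com/",
    "Server": "AmazonS3",
    "Content-Length": "0",
}

print(has_cache_headers(redirect_headers))
print(has_cache_headers({"Cache-Control": "max-age=3600"}))
```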

This rule should cache everything (Cache Level = Cache Everything), and keep that cached copy for a month before retrying the source server (Edge Cache TTL = a month). My redirects are going to rarely change, so it seems like a good choice.
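The same rule could also be expressed as a page rule payload for the Cloudflare API. This is a hedged sketch: it assumes the v4 page rules format (a `targets` list with a URL `matches` constraint, and `actions` using `cache_level` and `edge_cache_ttl`), and it approximates "a month" as 30 days in seconds, which may not match the exact value the dashboard uses. The pattern `example.com/*` is a placeholder, and the code only builds the JSON rather than calling the API.

```python
import json

SECONDS_PER_DAY = 24 * 60 * 60
EDGE_TTL_SECONDS = 30 * SECONDS_PER_DAY  # assumption: "a month" taken as 30 days


def cache_everything_rule(url_pattern: str, ttl_seconds: int = EDGE_TTL_SECONDS) -> dict:
    """Build a page rule body: cache everything, keep it at the edge for ttl_seconds."""
    return {
        "targets": [
            {"target": "url", "constraint": {"operator": "matches", "value": url_pattern}}
        ],
        "actions": [
            {"id": "cache_level", "value": "cache_everything"},
            {"id": "edge_cache_ttl", "value": ttl_seconds},
        ],
        "status": "active",
    }


print(json.dumps(cache_everything_rule("example.com/*"), indent=2))
```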

Checking the response headers, no cache headers are present. I'll keep an eye on the site to see whether the number of cached responses increases.
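To keep an eye on caching, one option is to send a few HEAD requests and read the `CF-Cache-Status` header Cloudflare adds to proxied responses (values such as HIT, MISS or EXPIRED). Below is a minimal sketch using only the standard library; `http.client` does not follow redirects, so it is the 301 itself that gets inspected. The host in the usage comment is a placeholder, and the network call is not run here.

```python
import http.client
from collections import Counter


def head(host: str, path: str = "/") -> tuple[int, dict]:
    """Send one HEAD request over HTTPS; redirects are not followed."""
    conn = http.client.HTTPSConnection(host, timeout=10)
    try:
        conn.request("HEAD", path)
        resp = conn.getresponse()
        # Lower-case header names so lookups don't depend on capitalisation.
        return resp.status, {name.lower(): value for name, value in resp.getheaders()}
    finally:
        conn.close()


def hit_ratio(cache_statuses: list[str]) -> float:
    """Fraction of CF-Cache-Status values that were HIT (0.0 for an empty list)."""
    counts = Counter(status.upper() for status in cache_statuses)
    total = sum(counts.values())
    return counts["HIT"] / total if total else 0.0


# Usage (placeholder host; performs network requests, so not run here):
# statuses = [head("example.com")[1].get("cf-cache-status", "NONE") for _ in range(5)]
# print(hit_ratio(statuses))
```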